LAST UPDATED July 26, 2022

As long as web application firewalls (WAFs) have existed, security teams have struggled with tuning and maintaining WAF signatures and rulesets. It is thankless, never-ending work that, even in the best cases, is prone to frequent false positives and false negatives. Yet even though it is one of the longest-standing complaints about legacy WAFs, it is a problem that never seems to go away.

So why has the industry been stuck fighting the same problem for so long? Is it something that can be fixed, or is the pain of rule management the unavoidable “death and taxes” of AppSec? At ThreatX, we are focused on finally making this problem go away by providing a platform that makes security much stronger while getting security teams off the rule management treadmill.

So let’s take a look below the surface to see why legacy rules are so problematic and what we can do about it.

A Common Problem Based on Common DNA

Legacy WAFs tend to suffer from the same problems when it comes to rules because they all fundamentally work the same way. In fact, many of the most popular commercial WAFs rely on the same underlying rules defined by ModSecurity. ModSecurity is a well-known open-source WAF, and its Core Rule Set (CRS) contains more than 17,000 regular expression-based rules. Each WAF vendor may customize and tune these ModSecurity rules to their liking, but under the hood, they are virtually identical.

This has led to an entire industry of WAFs where the core detection engines are all based on regex matching rules. And in most cases, WAFs require a LOT of these rules. And while rules and signatures are not inherently bad, a regex-centered view of the world can certainly lead to a wide range of challenges including …

False Positives and Negatives

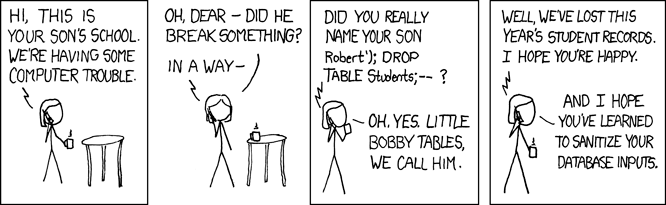

Regex rules look for specific strings that match a known threat. For example, this might be the pattern of a SQL injection, such as a user submitting UNION and SELECT statements to pull usernames and passwords out of an application’s database. And while regex rules can look for these statements, every use of “union” and “select” isn’t necessarily a sign of evil. For example, I recently talked to a prospect whose legacy WAF had blocked a user’s chat message for saying that he was going to “select all the users in my union.” In a similar situation, I’ve seen an online furniture retailer block prospective customers from viewing a “drop leaf kitchen table” because the query included the words “drop” and “table.” These are very simplified examples, but the same concept plays out over and over in far less obvious ways.
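To make the false-positive problem concrete, here is a minimal Python sketch. The two patterns are hypothetical signatures written for this illustration; they are not taken from ModSecurity, the CRS, or any vendor ruleset, and real rules are usually more elaborate. The sketch only shows that a pattern keyed on words cannot tell an attack from ordinary language that happens to contain the same words.

```python
import re

# Hypothetical signature-style rules, invented for illustration only.
NAIVE_RULES = {
    "sqli_union_select": re.compile(
        r"\bunion\b.*\bselect\b|\bselect\b.*\bunion\b", re.IGNORECASE
    ),
    "sqli_drop_table": re.compile(r"\bdrop\b.*\btable\b", re.IGNORECASE),
}


def matched_rules(text):
    """Return the names of every rule whose pattern appears in the text."""
    return [name for name, pattern in NAIVE_RULES.items() if pattern.search(text)]


samples = [
    "1' UNION SELECT username, password FROM users--",  # attack
    "select all the users in my union",                 # benign chat message
    "drop leaf kitchen table",                          # benign product search
    "blue sofa",                                        # benign, no match
]

for sample in samples:
    print(repr(sample), "->", matched_rules(sample))
```

The attack string and the chat message trigger the same rule, and the furniture search triggers the other one. Tightening the patterns to spare the benign inputs is exactly the tuning work described next.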

Of course, security teams never want to accidentally block valid users. So if a rule triggers a false positive, the rule may be disabled or heavily scaled back, and the change may quickly be applied throughout the site and to other applications to avoid problems. But if rules are applied too loosely, the WAF fails to do its job, and organizations are exposed to serious risks.

This Goldilocks exercise of tuning rules so that they are neither too hot nor too cold is a never-ending challenge. Every application is somewhat unique and will need rules tuned to the way it works. Applications will also naturally change as updates are applied, so rules that worked yesterday may not work today.

Heavily Dependent on Human Talent

The complexity of managing rules lands directly on security staff in a variety of ways. The first and most obvious is that it takes effort and expertise to properly tune and manage rules. In my experience, it is incredibly common for an enterprise to have multiple full-time security engineers dedicated entirely to maintaining its WAF rules. The work can quickly burn engineers out, meaning that organizations are always looking for more talent to dedicate to what is a Sisyphean task.

WAFs also often require many rules working together to meet the unique needs of an application. This overarching logic is often built up by a senior security engineer with intimate knowledge of the application and the types of threats it faces. If this engineer leaves the company, the rule set will often be far too complex for a new team to understand. Once again, staff may be afraid to make changes out of fear of unintentionally breaking things. In this case, it is very common to see staff simply start over with vanilla policies and slowly rebuild the ruleset over time.

Challenges With Complex and Business Logic Attacks

Regex rules excel at seeing simple, discrete attack events such as the aforementioned SQLi, cross-site scripting, CSRF, and other granular OWASP Top 10 types of attacks. However, what happens if the attacker’s input isn’t obviously evil? Modern attackers have shifted their techniques, often staying low and slow to avoid triggering security controls. Attacks may be spread out over several coordinated steps, while traditional rules are built to see individual events. As an analogy, they are built to recognize individual atoms, but they do a very poor job of understanding chemistry. The complex, multi-step techniques seen in modern attacks require security tools with this big-picture “chemistry” view, one that understands how all the various pieces interact and relate to one another.

Additionally, modern attackers heavily abuse the business logic of an application. These techniques use exposed application features in much the same way that valid users do. A credential stuffing attack is a classic example, where a botnet enters leaked username and password combinations into a login page to find accounts that reuse those credentials. While the action is still malicious, there is no obviously malicious code that a regex rule could use to tell an attacker from a valid user. Similarly, a legacy WAF would be almost powerless to distinguish a valid user buying a product from a bot trying to lock up a site’s inventory by adding products to a shopping cart.

How ThreatX Enables a Simpler Approach to Rule Management

Unlike legacy WAFs, ThreatX is not built on ModSecurity or a regex detection engine. Instead, we use a hybrid detection model that is attacker-centric and continually integrates all perspectives and events into a risk-based enforcement engine. There are a lot of interesting terms in that statement, so let’s briefly unpack them.

Hybrid Detection

ThreatX natively blends a variety of detection methodologies into every analysis. This includes behavioral application profiling, attacker profiling, active interrogation and deception, and more. Most importantly, these are not a series of modules bolted together, where each module looks for only a specific type of attack. With ThreatX, all detection methods work together, and all methods are applied to all traffic, all the time.

For example, consider the botnet described earlier that tries to lock up inventory by adding a limited edition product to shopping carts. ThreatX will interrogate each visitor in a way that distinguishes automated visitors from humans while remaining completely transparent to valid, human users. Likewise, by analyzing the normal behavior of the application, ThreatX could identify that the bots were making direct API-based calls to quickly add products to carts instead of navigating and selecting products like a valid user.

All of this intelligence works automatically without the need to write and tune complex regular expression rules, or configure, manage, and tune multiple separate modules or blades.
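The behavioral signal in the shopping cart example can be sketched in a few lines of Python. This is a toy illustration and not how ThreatX implements application profiling: the endpoint paths, the session identifiers, and the single "viewed a product page first" heuristic are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical endpoints for an imaginary storefront.
PRODUCT_PAGE_PREFIX = "/products/"
ADD_TO_CART_PATH = "/api/cart/add"


def sessions_skipping_navigation(events):
    """Return session ids that called add-to-cart before viewing any product page.

    `events` is an iterable of (session_id, path) tuples in arrival order.
    """
    viewed_product = defaultdict(bool)
    flagged = []
    for session_id, path in events:
        if path.startswith(PRODUCT_PAGE_PREFIX):
            viewed_product[session_id] = True
        elif path == ADD_TO_CART_PATH and not viewed_product[session_id]:
            if session_id not in flagged:
                flagged.append(session_id)
    return flagged


events = [
    ("human", "/"),
    ("human", "/products/42"),
    ("human", "/api/cart/add"),
    ("bot", "/api/cart/add"),  # direct API call, no browsing beforehand
    ("bot", "/api/cart/add"),
]

print(sessions_skipping_navigation(events))
```

Note that nothing in the bot's requests contains a malicious string. The signal comes from the order of otherwise valid requests, which is why a per-request regex has nothing to match on.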

Attacker-Centric Detection

In addition to detecting malicious actions, ThreatX goes to unmatched lengths to put the focus on the actual attackers, or as we call them, attacking entities. This is incredibly important in the context of modern attacks, which will often leverage thousands of bots that constantly rotate through IP addresses, user agents, and other traits. For example, if we again think of a credential stuffing attack, a botnet would spread the queries out over many bots, coming from many IP addresses. There would be no obvious way to distinguish a valid user from the bot.

In addition to the active interrogation techniques mentioned previously, ThreatX also uses a wide range of sophisticated fingerprinting techniques. For instance, advanced TLS fingerprinting can readily identify an attacking node even when it comes from another IP address. Instead of constantly being one step behind the attacker, ThreatX can use these techniques to proactively recognize every new incarnation of a bot. Once again, this gives us a way of stopping threats that would be virtually impossible using legacy WAF rules.
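As a rough illustration of why tracking an entity matters more than tracking an IP address, the Python sketch below counts failed logins two ways. The `fingerprint` field stands in for whatever client fingerprint a product computes; its values here are made-up labels, and the data is synthetic. Real fingerprints are not guaranteed to be unique to one attacker, so treat this as a sketch of the idea rather than a detection method.

```python
from collections import Counter


def failed_logins_by(key, attempts):
    """Count failed login attempts grouped by the given field name."""
    return Counter(a[key] for a in attempts if not a["success"])


# Synthetic data: one bot rotating through 50 IPs, plus one real user
# who mistypes a password once and then logs in successfully.
attempts = [
    {"ip": f"203.0.113.{i}", "fingerprint": "fp-bot", "success": False}
    for i in range(1, 51)
]
attempts.append({"ip": "198.51.100.7", "fingerprint": "fp-browser", "success": False})
attempts.append({"ip": "198.51.100.7", "fingerprint": "fp-browser", "success": True})

by_ip = failed_logins_by("ip", attempts)
by_fingerprint = failed_logins_by("fingerprint", attempts)

print("max failures from any single IP:", max(by_ip.values()))
print("failures per fingerprint:", by_fingerprint.most_common())
```

Grouped by IP, the bot looks identical to the user who mistyped a password: one failure each. Grouped by fingerprint, the same 50 attempts collapse onto a single entity.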

Risk-Based

Our risk-based decision engine is the heart of the ThreatX solution, and this is where all of the collected context comes together. To return to our previous analogy, this is the central brain that understands chemistry. The engine not only integrates all of the various detection strategies but also tracks cumulative risk based on events over time. It can see how multiple seemingly innocuous events fit together as part of a coordinated attack, and it can track the development of an attack across multiple phases, beginning with the earliest reconnaissance. Additionally, this view of risk is always up to date and can rise and fall based on what’s happening minute to minute or from day to day. This lets organizations take automated action when the cumulative risk rises to a specific level. Not only would this type of analysis be virtually impossible with regex rules, it would also require extensive correlation and analysis outside of the WAF. With ThreatX, the correlation is real-time, continuous, and automated.
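One simple way to model a score that accumulates across events and also falls over time is exponential decay with a blocking threshold. The Python sketch below is an assumption-laden illustration, not the ThreatX engine: the class name, the event weights, the half-life, and the threshold are all hypothetical values chosen to keep the arithmetic easy to follow.

```python
class EntityRisk:
    """Cumulative risk for one entity, halving every `half_life_seconds`."""

    def __init__(self, half_life_seconds=3600.0, block_threshold=10.0):
        self.half_life = half_life_seconds
        self.block_threshold = block_threshold
        self.score = 0.0
        self.last_update = None

    def _decay_to(self, now):
        # Apply decay for the time elapsed since the last update.
        if self.last_update is not None:
            elapsed = max(0.0, now - self.last_update)
            self.score *= 0.5 ** (elapsed / self.half_life)
        self.last_update = now

    def record(self, now, weight):
        """Add a suspicious event of the given weight and return the new score."""
        self._decay_to(now)
        self.score += weight
        return self.score

    def should_block(self, now):
        """True when the decayed score is at or above the threshold."""
        self._decay_to(now)
        return self.score >= self.block_threshold


risk = EntityRisk(half_life_seconds=60.0, block_threshold=10.0)
risk.record(now=0.0, weight=4.0)   # e.g. a reconnaissance-style probe
risk.record(now=0.0, weight=4.0)   # e.g. an unusual request sequence
print(risk.should_block(now=0.0))  # two events alone stay under the threshold
risk.record(now=0.0, weight=3.0)   # a third event tips the total over
print(risk.should_block(now=0.0))
print(risk.should_block(now=60.0)) # one half-life later the score has halved
```

No single event in this sketch is enough to block the entity. The decision comes from the running total, and an entity that goes quiet drifts back below the threshold on its own.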

Hopefully, this gives you a useful introduction to how ThreatX differs from legacy WAFs, and how it can help your organization avoid many of the painful, time-consuming aspects of WAF rules. Of course, rules never completely go away. Again, every app is unique, and organizations will have their own specific needs and goals. However, with the right intelligence, rules don’t have to be painful, and they can be expressed in the natural logic of the business and the applications themselves, instead of thousands of curated regex rules.

For more details on the difference between WAF and WAAP, check out our recent webcast. To see for yourself how we can help you achieve better security with less effort, please sign up for a demo.